Nvidia DeepStream is an AI framework for computer vision that helps you get the most out of Nvidia GPUs, both on Jetson devices and on discrete GPUs. It lets edge devices such as the Jetson Nano and others in the Jetson family process multiple video streams in parallel and in real time.

DeepStream uses GStreamer pipelines (written in C) to bring the input video into GPU memory, which speeds up all the processing that follows.

Video analytics plays a vital role in the transportation, healthcare, retail, and physical security industries. DeepStream by Nvidia is an intelligent video analytics (IVA) SDK that lets you attach and remove video streams without affecting the rest of the environment. Nvidia has been improving its deep learning stack to give developers better and more accurate SDKs for building AI-based applications.

DeepStream is a bundle of plug-ins used to build a deep learning video analysis pipeline. Developers don’t have to build the entire application from scratch. They can use the DeepStream SDK (free to download, with sample apps and some plugins shipped as source) to speed up the process and reduce the time and effort invested in the project. As a streaming analytics toolkit, it helps create smart systems that analyze videos using artificial intelligence and deep learning.

DeepStream is flexible and runs on discrete Nvidia GPUs as well as on system-on-chip platforms such as the Nvidia Jetson devices. It makes it easier to build complex applications with the following:

Multiple streams

Scaling is easy when using DeepStream as it allows you to deploy applications in stages. This helps maintain accuracy and minimize the risk of errors.

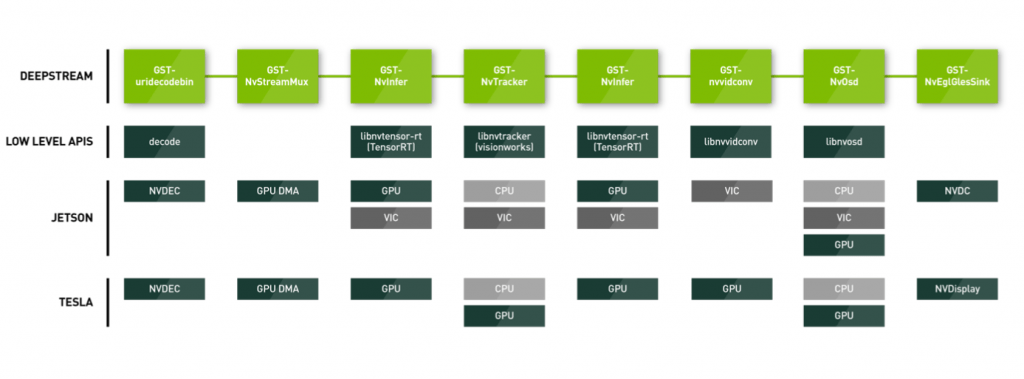

DeepStream has a plugin-based architecture. The graph-based pipeline interface allows components to be interconnected at a high level. It enables heterogeneous parallel processing using multithreading on both the GPU and the CPU.

Here are the major components of DeepStream and their high-level functions:

Metadata is generated by the graph at every stage. From it we can read many important fields, such as the type of object detected, ROI coordinates, object classification, and source.

The decoders decode the input video (H.264 and H.265). They support decoding multiple streams simultaneously and take bit depth and resolution as parameters.

The muxer accepts n input streams and converts them into sequential batched frames. It uses low-level APIs to access both the GPU and the CPU for this.
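The batching of n input streams described above can be illustrated without any DeepStream dependency. The sketch below is a plain-Python illustration only, not the actual muxer implementation: `batch_frames` is a hypothetical helper that takes one frame from each source per round and groups them into a batch, tagging every frame with the index of the stream it came from.

```python
from itertools import zip_longest


def batch_frames(streams):
    """Yield batches holding one (source_id, frame) pair per active stream.

    `streams` is a list of iterables standing in for decoded video sources.
    This is an illustrative stand-in, not the real muxer logic.
    """
    missing = object()  # sentinel for streams that have run out of frames
    for round_frames in zip_longest(*streams, fillvalue=missing):
        batch = [
            (source_id, frame)
            for source_id, frame in enumerate(round_frames)
            if frame is not missing
        ]
        yield batch


# Two toy "streams" of different lengths.
streams = [["a0", "a1", "a2"], ["b0", "b1"]]
batches = list(batch_frames(streams))
```

Keeping the source index next to each frame is what allows later stages to map a detection back to the stream it belongs to.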

Inference runs the model on the batched frames. All the model-related work is done through nvinfer. It also supports primary and secondary modes and various clustering methods.

Format conversion converts frames from YUV to RGBA/BGRA, scales the resolution, and handles image rotation.

The tracker uses CUDA and is based on the KLT reference implementation. The default tracker can also be replaced with other trackers.

The tiler manages the output videos; it is roughly the equivalent of OpenCV’s imshow function.

The on-screen display manages everything drawn on the screen, such as lines, bounding boxes, circles, and ROIs.

The sink, as the name suggests, is the last element of the pipeline, where the normal flow ends.

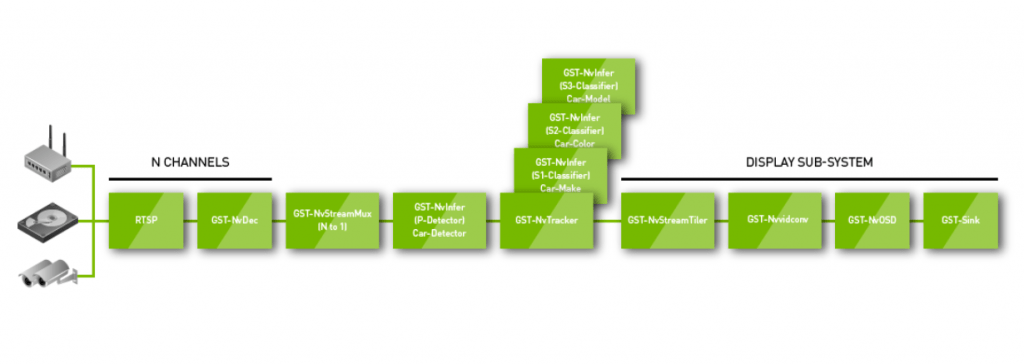

Decoder -> Muxer -> Inference -> Tracker (if any) -> Tiler -> Format Conversion -> On Screen Display -> Sink
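As a rough aid, the flow above can be written down as data. The stage order in this sketch comes from the flow itself; the GStreamer element names are an assumption (they are the names commonly used for the DeepStream plugins and may differ between SDK versions), and `stage_elements` and `describe` are hypothetical helpers. The output is a readable summary, not a ready-to-run pipeline description.

```python
# Stage order taken from the flow above. The element names are assumed
# DeepStream plugin names and should be checked against your SDK version.
STAGES = [
    ("Decoder", "nvv4l2decoder"),
    ("Muxer", "nvstreammux"),
    ("Inference", "nvinfer"),
    ("Tracker", "nvtracker"),  # optional stage
    ("Tiler", "nvmultistreamtiler"),
    ("Format Conversion", "nvvideoconvert"),
    ("On Screen Display", "nvdsosd"),
    ("Sink", "nveglglessink"),
]


def stage_elements(use_tracker=True):
    """Return the element names in pipeline order, optionally without the tracker."""
    return [element for stage, element in STAGES
            if use_tracker or stage != "Tracker"]


def describe(use_tracker=True):
    """Render the chain of elements as a single readable string."""
    return " -> ".join(stage_elements(use_tracker))


print(describe())
print(describe(use_tracker=False))
```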

The DeepStream app consists of two parts, one is the config file and the other is its driver file (can be in C or in Python).

[property]

# GPU ID selects which GPU device to use; default is 0

gpu-id=0

# Pixel scaling factor applied to the input images (about 1/255)

net-scale-factor=0.0039215697906911373

# Key is the one you get from ngc.nvidia.com; use the same key you used to train the model

tlt-model-key=tlt_encode

# Path to the tlt model

tlt-encoded-model=/path/detectnet_v2_models/detectnet_4K-fddb-12/resnet18_RGB960_detector_fddb_12_int8.etlt

# Path to label file

labelfile-path=labels_masknet.txt

# In case you converted the etlt to a GPU engine file (TensorRT), provide that path; this reduces loading time at startup.

model-engine-file=/path/detectnet_v2_models/detectnet_4K-fddb-12/resnet18_RGB960_detector_fddb_12_int8.etlt_b1_gpu0_int8.engine

# Input dims of the image (channels;height;width;input-order)

input-dims=3;960;544;0

# If the model was trained with the TLT kit, provide the UFF params.

uff-input-blob-name=input_1

batch-size=1

model-color-format=0

## 0=FP32, 1=INT8, 2=FP16 mode

# On Jetson Nano, use network mode 2.

network-mode=1

num-detected-classes=2

# Clustering method used by the inference plugin to group detections; see the documentation for the clustering modes available

cluster-mode=1

# Interval is the equivalent of skip frames: the number of frames skipped between inferences

interval=0

# The GIE unique ID identifies this inference engine, so the objects it produces can be accessed and told apart from those of other engines.

gie-unique-id=1

# Used when running a classifier after detection

is-classifier=0

classifier-threshold=0.9

# It is important to use the same output layers that were used when training the model.

output-blob-names=output_bbox/BiasAdd;output_cov/Sigmoid

We can also set thresholds per class:

[class-attrs-0]

pre-cluster-threshold=0.3

group-threshold=1

eps=0.5

For more information on these properties, refer to the DeepStream documentation for the nvinfer plugin.
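Because the config file uses an INI-style layout, it can be sanity-checked before the app is launched. The following is a minimal sketch using Python's standard `configparser`, assuming a trimmed copy of the config above held in a string; `summarize` and `NETWORK_MODES` are hypothetical names, and DeepStream itself does not need this step.

```python
import configparser
import math

# Trimmed copy of the config shown above, kept inline so the sketch is
# self-contained. In practice the text would be read from the config file.
CONFIG_TEXT = """
[property]
gpu-id=0
net-scale-factor=0.0039215697906911373
input-dims=3;960;544;0
batch-size=1
## 0=FP32, 1=INT8, 2=FP16 mode
network-mode=1
num-detected-classes=2

[class-attrs-0]
pre-cluster-threshold=0.3
"""

NETWORK_MODES = {0: "FP32", 1: "INT8", 2: "FP16"}


def summarize(text):
    """Parse the config text and return a few fields worth checking."""
    parser = configparser.ConfigParser()
    parser.read_string(text)
    prop = parser["property"]
    return {
        "precision": NETWORK_MODES[prop.getint("network-mode")],
        # The scale factor should be close to 1/255.
        "scale_is_1_over_255": math.isclose(
            prop.getfloat("net-scale-factor"), 1 / 255, rel_tol=1e-6
        ),
        "input_dims": [int(v) for v in prop["input-dims"].split(";")],
        "class0_threshold": parser["class-attrs-0"].getfloat(
            "pre-cluster-threshold"
        ),
    }


summary = summarize(CONFIG_TEXT)
print(summary)
```

A check like this catches a mistyped key or an unexpected precision mode early, before the engine file is built.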

When using DeepStream, we can choose between three network modes: FP32, INT8, and FP16.

Performance varies with the network mode: INT8 is the fastest, while FP32 is the slowest but the most accurate. The Jetson Nano, however, cannot run INT8.
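The trade-off between the network modes can be expressed as a small selection rule. This is only a sketch of the reasoning above: the numeric values follow the `network-mode` comment in the config (0=FP32, 1=INT8, 2=FP16), while `pick_network_mode` and its parameters are hypothetical and not part of DeepStream.

```python
# Values follow the config comment: 0=FP32, 1=INT8, 2=FP16.
NETWORK_MODES = {"FP32": 0, "INT8": 1, "FP16": 2}


def pick_network_mode(supports_int8, prefer_accuracy=False):
    """Return a network-mode value for the config file.

    FP32 is chosen when accuracy matters most, INT8 when the device
    supports it and speed matters, and FP16 otherwise.
    """
    if prefer_accuracy:
        return NETWORK_MODES["FP32"]
    if supports_int8:
        return NETWORK_MODES["INT8"]
    return NETWORK_MODES["FP16"]


# Jetson Nano cannot run INT8, so it falls back to FP16 (network-mode=2).
nano_mode = pick_network_mode(supports_int8=False)
print(f"network-mode={nano_mode}")
```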

DataToBiz has expertise in developing state-of-the-art computer vision algorithms and running inference with them on edge devices in real time. For more details, contact us.

DataToBiz is a Data Science, AI, and BI Consulting Firm that helps Startups, SMBs and Enterprises achieve their future vision of sustainable growth.
